Home Page

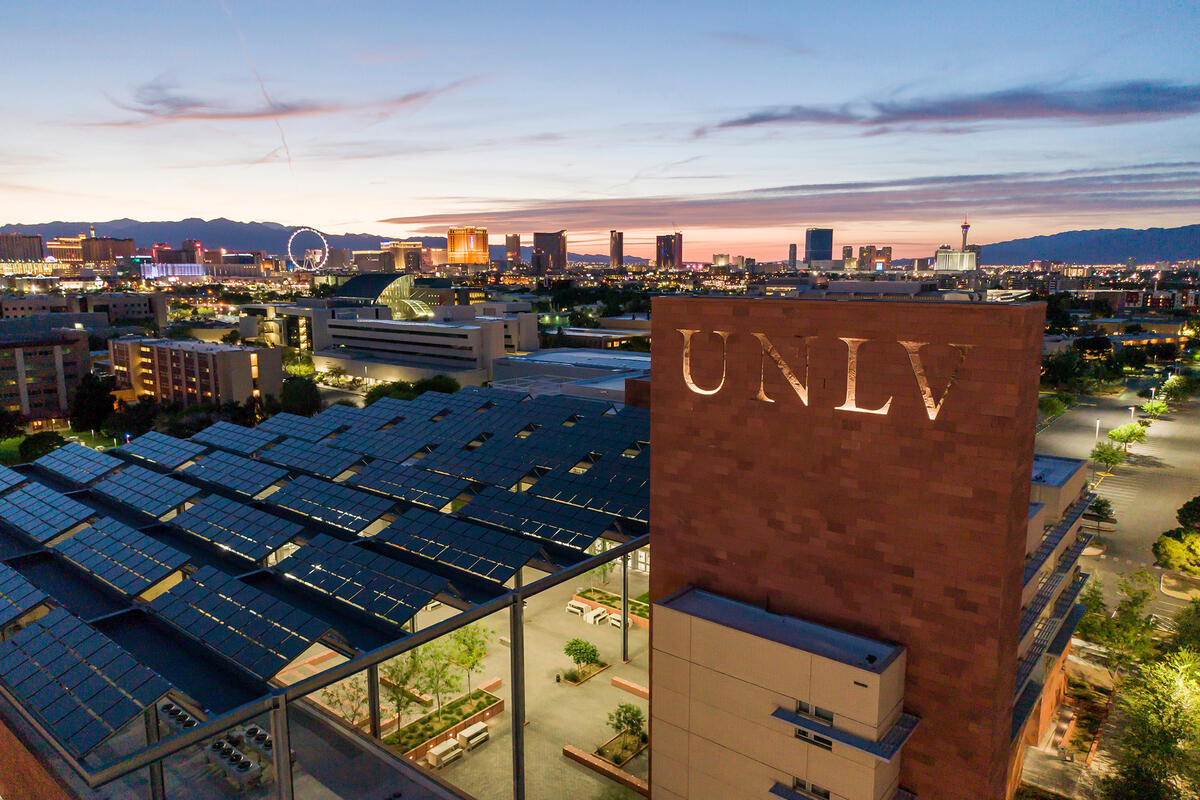

Welcome to my homepage! I am an Assistant Professor in the Department of Computer Science at UNLV. My areas of expertise are computer science education and programming languages. Feel free to explore the sections on the left.